The AI Network Is The Computer of the Future

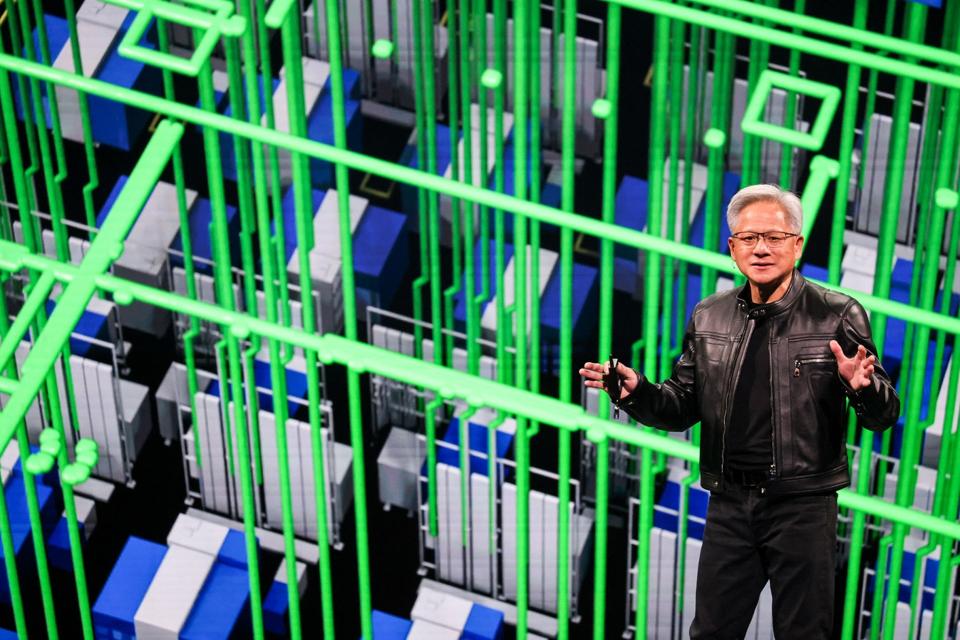

Nvidia, which has reclaimed its title as the world’s most valuable company, is now focused on defining and building a worldwide network of AI processing units. Its continued success, and its ability to stay on top, depend on this vision. In this news report from Karina Web, we look at this approach in detail.

In a conversation with Bob Metcalfe, the inventor of Ethernet, Jensen Huang, Nvidia’s co-founder and CEO, described the future of computer applications as one where “part of the application runs in the data center, another part in a data center at the edge, and another part in an autonomous machine roaming around the world.”

Five years ago, Huang described data centers as a “composable disaggregated infrastructure,” in which the critical path is the interaction between one “computing node” and another “computing node” over the Ethernet network. Metcalfe asked, “Is this why you bought Mellanox?” Huang replied, “It is exactly the reason why I bought Mellanox,” and added: “Understanding the direction of software inspires you about what’s the best way to design and evolve hardware.” In other words, by anticipating how applications will be developed and run, Nvidia added new hardware to its portfolio so that customers can move data inside and outside the data center faster, more efficiently, more resiliently, and at lower cost.

Founded in Israel in 1999, Mellanox initially developed networking products based on the then-new InfiniBand standard. These products offered high throughput and low latency, enabling fast data movement between computing nodes. Mellanox later added Ethernet-based products. Nvidia agreed to acquire the company for $6.9 billion in 2019, and the deal closed in 2020.

The Spectrum-X Platform and the Importance of the Network in AI

Kevin Deierling, Nvidia’s senior vice president of networking, explains that the nature of AI data processing makes the network’s capabilities critical. Traditional cloud computing serves millions of users, each transferring small amounts of unsynchronized data. AI workloads running on Nvidia’s GPUs, by contrast, operate in parallel. Deierling says, “With AI workloads, we have enormous, what we call ‘elephant data flows,’ that are synchronized.” Each of the many AI computing nodes processes its share of the data and then shares its results with all the other nodes at the same time, which produces extremely bursty traffic.
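To make the contrast concrete, here is a minimal Python sketch, assuming a simplified model in which time is divided into discrete steps. Cloud traffic is modeled as many users each sending one small message at a random step; AI traffic is modeled as an all-gather, a collective commonly used in distributed training where every node sends its shard to every other node at the same step. The function names, node counts, and byte sizes are hypothetical and illustrative only, not measurements of any real cloud or Nvidia network.

```python
import random

# Illustrative sketch only: function names and traffic sizes are hypothetical,
# not measurements of any real cloud or Nvidia network.


def cloud_traffic(num_users: int, steps: int, max_bytes: int, seed: int = 0) -> list[int]:
    """Unsynchronized traffic: each user sends one small message at a random time step."""
    rng = random.Random(seed)
    per_step = [0] * steps
    for _ in range(num_users):
        per_step[rng.randrange(steps)] += rng.randint(1, max_bytes)
    return per_step


def all_gather_traffic(num_nodes: int, shard_bytes: int, steps: int, sync_step: int) -> list[int]:
    """Synchronized traffic: at one step, every node sends its shard to every other node."""
    per_step = [0] * steps
    # Each of num_nodes nodes sends shard_bytes to each of the (num_nodes - 1) others.
    per_step[sync_step] = num_nodes * (num_nodes - 1) * shard_bytes
    return per_step


def peak_to_mean(per_step: list[int]) -> float:
    """Ratio of the busiest step to the average step; higher means burstier traffic."""
    total = sum(per_step)
    if total == 0:
        return 0.0
    return max(per_step) / (total / len(per_step))


if __name__ == "__main__":
    cloud = cloud_traffic(num_users=10_000, steps=10, max_bytes=1_000)
    ai = all_gather_traffic(num_nodes=8, shard_bytes=100_000_000, steps=10, sync_step=3)
    print(f"cloud peak/mean: {peak_to_mean(cloud):.2f}")
    print(f"all-gather peak/mean: {peak_to_mean(ai):.2f}")
```

In this toy model the all-gather puts all of its traffic into a single step, so its peak-to-mean ratio equals the number of steps (10.0 here), while the randomly scattered cloud traffic stays close to 1. The network must be provisioned for that synchronized peak, not for the average.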

The second trend driving the need for Spectrum-X’s capabilities is a shift in the focus of AI projects. Until recently, AI work mainly involved “training,” that is, feeding an AI model vast amounts of data so that it learns patterns and relationships. Now, however, enterprises are moving to “inference”: using the trained model to process new data, make predictions, or take action.

With inference, many customers share the same network infrastructure, which raises performance expectations and requirements. The Spectrum-X platform addresses these by bringing InfiniBand-class bandwidth and latency to Ethernet. A major benefit of using Ethernet to connect every component of the AI infrastructure, from data storage to the data processing units, is that it is a widely deployed standard already familiar to the many customers now investing in AI.

Deierling reports that enterprises are rapidly adopting AI agents and customizing them by adding their proprietary data to a trained model. “The next wave we’re starting to see is physical AI, edge applications, and robotics,” he says. In that wave, Ethernet connects everything from the cloud to enterprise data centers to mobile and stationary sensors.

“The Network is the Computer” was the 1984 tagline of Sun Microsystems, a maker of “workstations,” or networked desktop computers. Four decades later, with AI constituting “a new way of writing software,” everyone will want to be a coder, writing applications for the composable disaggregated infrastructure that Nvidia and its partners develop and maintain. Huang concluded: “We found ourselves at the right place at the right time. Part insight, part strategy, part serendipity.”

Source: forbes.com